GEO (Generative Engine Optimisation) is how your brand shows up inside ChatGPT, Claude, Perplexity, Gemini and Copilot. Most Australian brands are not there yet. The ones that start now will compound their lead. The ones that wait twelve months will find it increasingly hard to catch up.

What follows is the 30/60/90 day plan we run for our own client programmes at ClickedOn. It is written for marketing managers, not specialists, and it assumes one person leading with occasional input from a developer or agency. If you only have ten minutes today, skip to the Days 1-30 section. That is where it starts.

The current state of play in Australia

Open ChatGPT. Ask it to recommend the top five companies in your category in Australia. Do the same in Perplexity, Claude and Google AI Mode. If your brand is not in the answer, that is your baseline. It is also the conversation your buyers are having right now.

We run these prompts across client accounts every week, and the pattern is consistent. In most B2B and mid-market B2C categories in Australia, one or two brands dominate the AI answer set. A second tier gets mentioned inconsistently. Everyone else is invisible.

The dominant brands are not always the largest or the best known offline. They are the ones whose content is structured for extraction, who are cited on trusted third-party sites, and whose technical foundations let AI crawlers read them cleanly.

The harder part is that the gap is compounding. Each citation earned on a credible source (the ABC, SMH, a respected industry publication) feeds the authority signal that makes the next citation more likely. SE Ranking's analysis of 2.3 million pages found that high-authority domains earn roughly 3x more AI citations than lower-authority peers. Previsible's 2025 AI Traffic Report tracked a 527% year-on-year rise in AI-referred sessions. The leaders are not just ahead. They are advancing faster.

Why catching up gets harder the longer you leave it

GEO behaves more like brand building than paid media. You cannot turn it on overnight. The three things that move the needle (structured answer-first content, domain authority through third-party citations, and content freshness) all take months to accumulate, and none of them can be shortcut with budget alone.

AI traffic is not huge today, and it is genuinely hard to measure. In our client data it sits at around 1% of captured sessions, but it accounts for up to 10% of traffic impact and between 10% and 15% of leads once you factor in assisted conversions. Those numbers are only going up. Gartner projects traditional search volume will drop 25% by the end of 2026 as AI-mediated discovery takes more share. That demand is not disappearing. It is moving into AI answers. The question is whether your brand moves with it.

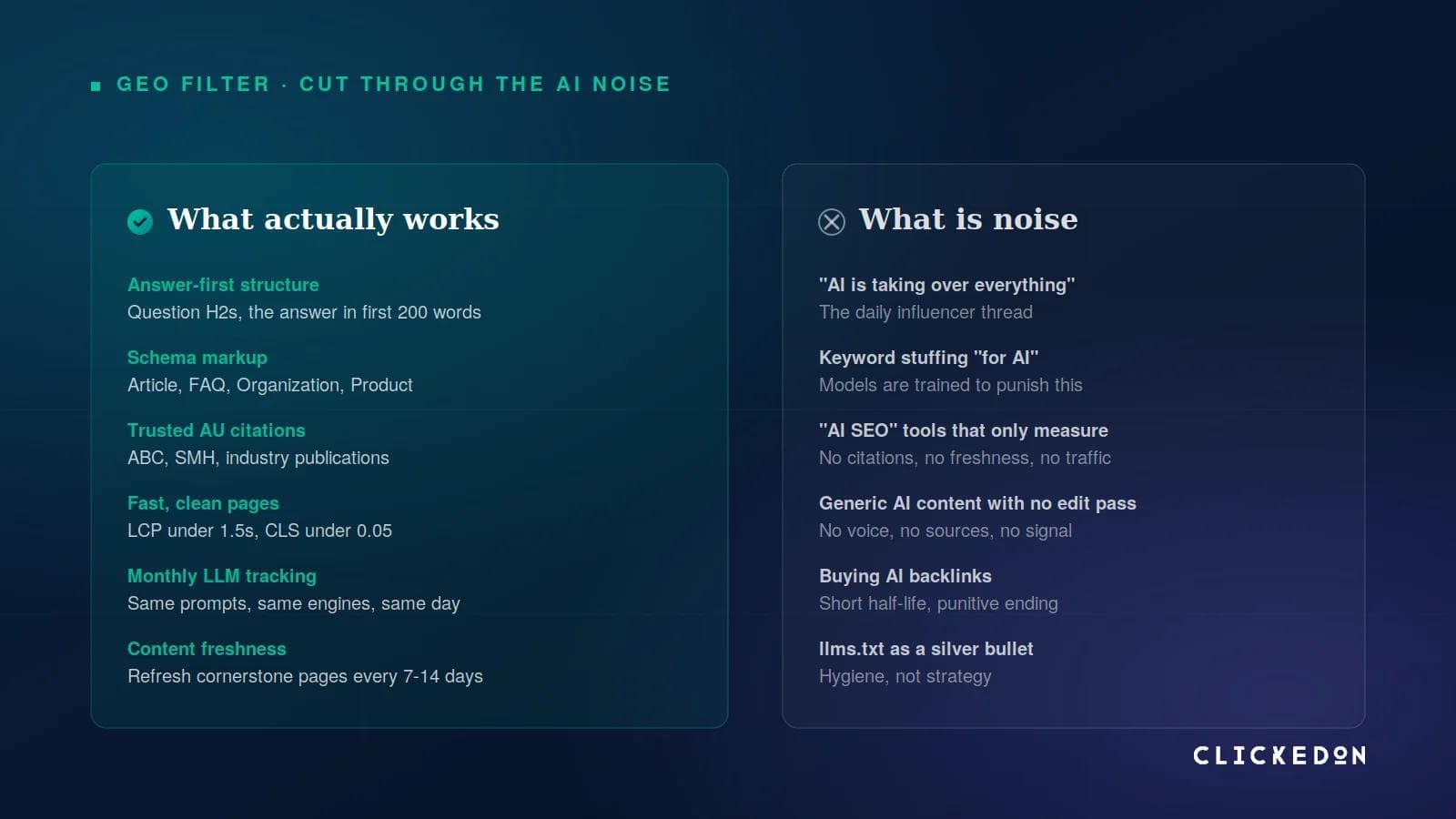

What actually works, and what is noise

Before we get to the 90-day plan, a quick filter on what cuts through. There is a lot of AI-era marketing noise right now, and most of it is not worth your time or your budget.

What actually works

- Answer-first content structure, with question-format H2 headings and the direct answer in the first 200 words. The test is simple. What is the soundbite you would say to a friend at a BBQ? Put that at the top. That is answer-first.

- Schema markup (Article, FAQPage, Organization, Product) that tells AI engines what the page is. It is the technical layer, but without it the LLM reads your content the way you would read a page written in Greek.

- Third-party citations on trusted Australian publications and industry sources. Authority still matters, and it will matter more as generic content becomes trivial to produce.

- Fast, clean pages. Target Largest Contentful Paint (LCP) under 1.5 seconds and Cumulative Layout Shift (CLS) under 0.05. Google's free PageSpeed Insights tool will check any page.

- Monthly tracking of brand mentions and sentiment across the five major AI engines.

- Content refreshed every 7 to 14 days on your cornerstone pages. Most GEO-driven recommendations we see come from content written in the last thirteen weeks. Freshness is a core signal, and a core deliverable.

What is noise

- The influencer telling you AI is taking over everything, plus the daily thread of "new" ways to work with LLMs.

- Keyword stuffing "for AI". The models are trained to punish this.

- "AI SEO" tools that only measure without doing anything to improve citations, content freshness, or AI-influenced traffic.

- Generic AI content generation with no brand voice, no sources, and no edit pass.

- Buying AI backlinks. Same half-life as buying regular backlinks, which is to say short and punitive.

The 90-day plan below focuses entirely on the first list.

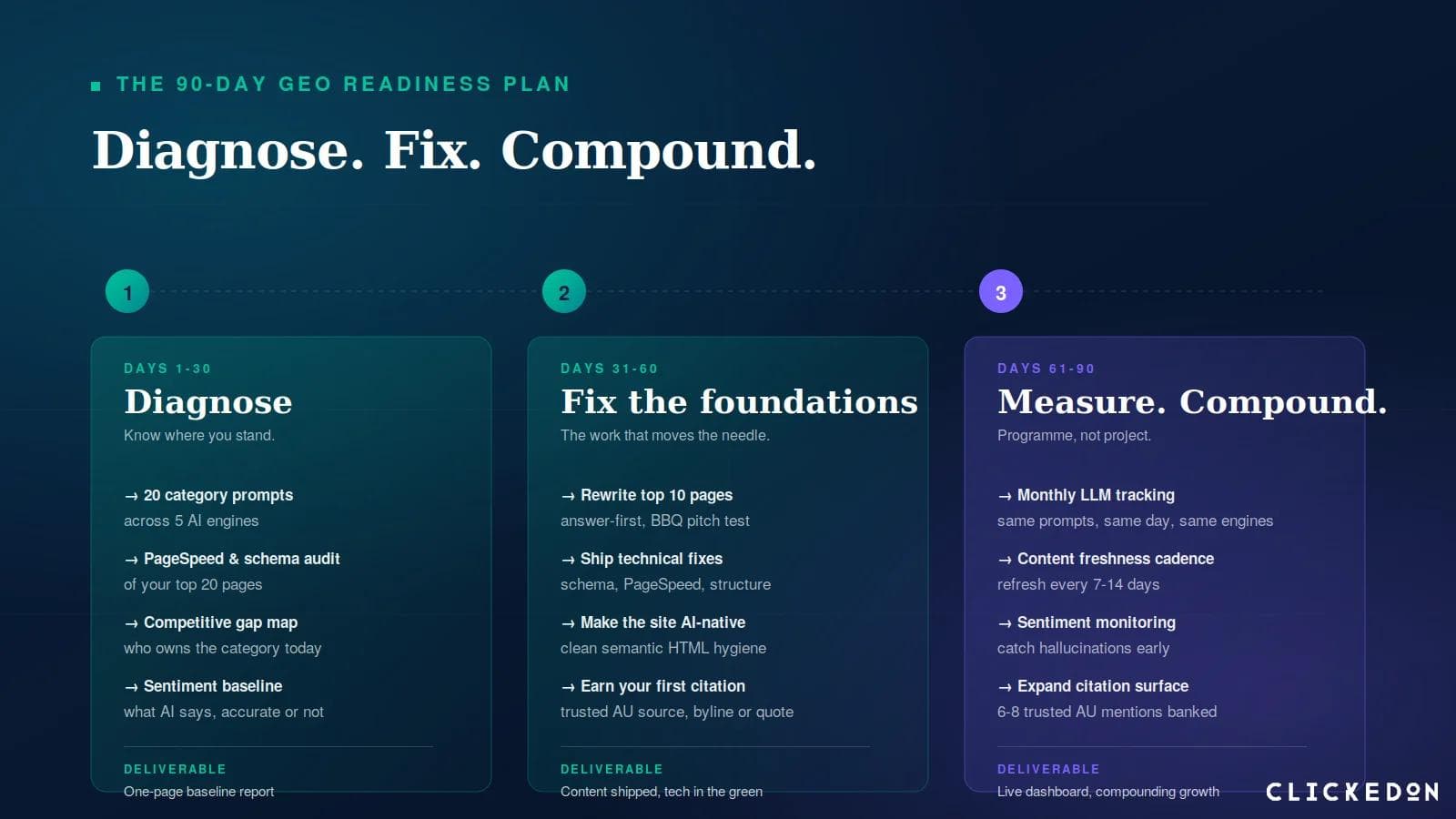

The 30/60/90 day GEO readiness plan

This plan assumes one marketing manager leading, with occasional input from a developer or agency. The goal at the end of ninety days is a measurable GEO programme running and improving, not a complete transformation.

Days 1-30: know where you stand

The first month is about building a baseline. Measure where you are, what you can track, and where the gaps sit.

Run brand prompts across the five major AI engines. Pick twenty prompts a buyer in your category would actually type. Category queries ("best commercial solar installers in Sydney"), comparison queries ("my brand vs my competitor's brand"), and problem queries ("how do I reduce my company's energy costs"). Run each one in ChatGPT, Claude, Perplexity, Gemini and Copilot. Record who is cited, in what position, and with what framing. That is your share-of-AI-voice baseline.
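If you keep the prompt log in a spreadsheet, the share-of-voice score is simple arithmetic. Here is a minimal Python sketch of one way to calculate it. The row layout (`prompt`, `engine`, `cited`) and the brand names are illustrative assumptions, not a fixed format.

```python
from collections import defaultdict

# Hypothetical log format: one row per prompt per engine,
# with brands listed in the order the answer cited them.
example_runs = [
    {"prompt": "best commercial solar installers in Sydney",
     "engine": "ChatGPT", "cited": ["BrandA", "BrandB", "YourBrand"]},
    {"prompt": "best commercial solar installers in Sydney",
     "engine": "Perplexity", "cited": ["BrandA"]},
]


def share_of_voice(runs):
    """Fraction of runs in which each brand is cited at least once."""
    counts = defaultdict(int)
    for run in runs:
        for brand in set(run["cited"]):  # count a brand once per answer
            counts[brand] += 1
    total = len(runs)
    return {brand: n / total for brand, n in counts.items()} if total else {}


def average_position(runs, brand):
    """Mean 1-based position of the brand across the answers that cite it."""
    positions = [run["cited"].index(brand) + 1
                 for run in runs if brand in run["cited"]]
    return sum(positions) / len(positions) if positions else None
```

Framing is harder to score automatically. Keep it as a free-text column next to each row and review it by hand.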

LLM answers vary from run to run and can be personalised to the user, which makes these baselines harder than old SEO baselines. We test each prompt fifty times a day through LLM probing, and then give a weighted estimate. Only at that sample size does the data become reliable enough to act on.
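To see why repeated runs matter, it helps to put an error bar on the mention rate. The sketch below uses a standard Wilson score interval. This is a common statistical technique we are swapping in for illustration, not the weighting method described above, and the function name and example numbers are assumptions.

```python
import math


def wilson_interval(mentions, trials, z=1.96):
    """Approximate 95% confidence interval for a mention rate.

    mentions: number of runs in which the brand appeared
    trials:   total number of runs of the same prompt
    z:        1.96 corresponds to roughly 95% confidence
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = mentions / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)
    ) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# A brand mentioned in 12 of 50 runs has an observed rate of 24%,
# but the interval shows how wide the plausible range still is.
low, high = wilson_interval(12, 50)
```

The interval narrows as the number of runs grows, which is the practical argument for testing a prompt many times rather than once.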

Audit your technical foundation. Run your top twenty pages through PageSpeed Insights. The targets that matter for AI crawlers and for user experience are LCP under 1.5 seconds and CLS under 0.05 on mobile. Anything slower, and you are losing both AI citations and Google rankings, because the two systems share infrastructure. Check each page has a canonical URL, a unique title, a meta description, and at least one relevant JSON-LD schema type.
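The on-page checks can be scripted once you have each page's HTML saved. Below is a minimal sketch using only the Python standard library. The LCP and CLS values are assumed to be copied in by hand from PageSpeed Insights, and `audit_page` is a hypothetical helper name. Title uniqueness needs a comparison across pages, so this only checks that a title exists.

```python
from html.parser import HTMLParser

LCP_TARGET_SECONDS = 1.5  # targets taken from the plan above
CLS_TARGET = 0.05


class PageAudit(HTMLParser):
    """Collects the head elements the audit cares about."""

    def __init__(self):
        super().__init__()
        self.has_canonical = False
        self.has_meta_description = False
        self.has_json_ld = False
        self.title = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "link":
            rel = (a.get("rel") or "").lower().split()
            if "canonical" in rel and a.get("href"):
                self.has_canonical = True
        elif tag == "meta":
            is_description = (a.get("name") or "").lower() == "description"
            if is_description and (a.get("content") or "").strip():
                self.has_meta_description = True
        elif tag == "script":
            if (a.get("type") or "").lower() == "application/ld+json":
                self.has_json_ld = True
        elif tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_page(html, lcp_seconds, cls):
    """Return a pass/fail flag for each item in the technical audit."""
    parser = PageAudit()
    parser.feed(html)
    return {
        "canonical": parser.has_canonical,
        "title": bool(parser.title.strip()),
        "meta_description": parser.has_meta_description,
        "json_ld": parser.has_json_ld,
        "lcp_ok": lcp_seconds < LCP_TARGET_SECONDS,
        "cls_ok": cls < CLS_TARGET,
    }
```

Run it over your top twenty pages and any `False` value goes straight into the gap list for the day-30 report.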

Map the competitive gap. From your prompt testing, identify the two or three brands already winning your category inside AI answers. Look at their content. How are their H2s structured? Are their cornerstone pages question-format? Do they have FAQ schema? What third-party sites cite them? That is your benchmark and your target list. Run them through PageSpeed Insights alongside your own pages.

Establish a sentiment and accuracy baseline. For every prompt where your brand is mentioned, note the framing. Is it positive, neutral, or negative? Is the claim accurate, or is the model hallucinating an outdated statistic, the wrong leadership team, or a product you no longer offer? Sentiment and accuracy are your quiet reputation risk. If ChatGPT is confidently telling 10,000 buyers a month that your business closed in 2022, that is the first thing to fix.

Deliverable at day 30: a one-page GEO baseline report with share-of-voice scores, PageSpeed and schema gaps, competitor benchmarks, and a sentiment log. Take it to your leadership team. It is the business case for the next sixty days.

Days 31-60: fix the foundations

Month two is where the real work happens. With the baseline in hand, the fixes become obvious and prioritised.

Rewrite your top ten pages as answer-first content. These are your highest-traffic or most commercially important pages. Typically that is your homepage, two or three category pages, and six or seven cornerstone service or product pages. For each, rewrite the first 200 words to answer the primary query directly, in the same language a buyer would use. Convert three or four H2 headings into question format. Add an FAQ section with four to six questions drawn from your actual sales calls and support tickets. This is the single highest-impact change in the plan, and it is almost entirely a copy exercise. Our dedicated answer engine optimisation service can run this workflow at pace if you want it done in weeks rather than months.

Ship the technical fixes. From the day-30 audit, prioritise three categories. First, PageSpeed (compress images, defer non-critical JavaScript, preload the hero image). Second, schema markup (Article on blog posts, FAQPage on service pages, Organization on the homepage and about page, Product where relevant). Use our free schema generator to produce valid JSON-LD. Third, content structure (canonical URLs on every page, a clean H1, meta descriptions under 155 characters). Most of this is a two-week developer sprint, not a replatform.
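For a sense of what the schema step produces, here is a small Python sketch that builds FAQPage JSON-LD from question and answer pairs. The structure follows the schema.org FAQPage type. The function name is a hypothetical helper, and your CMS or a schema generator may produce the same output for you.

```python
import json


def faq_json_ld(pairs):
    """Build a FAQPage JSON-LD script tag from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


snippet = faq_json_ld([
    ("What is GEO?",
     "GEO is the practice of earning brand citations inside AI answers."),
])
```

The questions and answers in the markup should match the FAQ text visible on the page, and it is worth running the result through a structured data validator before shipping.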

Make the site AI-native. This sounds technical but it is mostly hygiene. Clean, semantic HTML (real <article>, <section>, <h2> tags, not divs). Images with alt text. Internal links between related pages. A clear, crawlable URL structure. AI engines parse the same HTML that Google does. A site that is easy for Googlebot to read is easy for the AI crawlers too.
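A quick way to spot-check this hygiene is to count semantic tags and images without alt text in a page's HTML. The sketch below is a rough standard-library check, not a full accessibility audit, and the set of tags it counts is an illustrative assumption.

```python
from html.parser import HTMLParser

# Tags named in the paragraph above, plus h1 (an assumption for illustration).
SEMANTIC_TAGS = ("article", "section", "h1", "h2")


class HygieneCheck(HTMLParser):
    """Counts every tag and flags images with missing or empty alt text."""

    def __init__(self):
        super().__init__()
        self.tag_counts = {}
        self.images_missing_alt = 0

    def handle_starttag(self, tag, attrs):
        self.tag_counts[tag] = self.tag_counts.get(tag, 0) + 1
        # Note: purely decorative images may use an empty alt on purpose,
        # so treat this count as a prompt to review, not a hard failure.
        if tag == "img" and not (dict(attrs).get("alt") or "").strip():
            self.images_missing_alt += 1


def hygiene_report(html):
    """Summarise semantic tag usage and alt-text gaps for one page."""
    check = HygieneCheck()
    check.feed(html)
    return {
        "semantic": {t: check.tag_counts.get(t, 0) for t in SEMANTIC_TAGS},
        "div_count": check.tag_counts.get("div", 0),
        "images_missing_alt": check.images_missing_alt,
    }
```

A page with hundreds of divs and no `article`, `section` or `h2` tags is a candidate for the developer sprint.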

Worth noting: if your current site is slow or built on legacy infrastructure, the effort needed here can quietly become a replatform. We have been moving client sites onto an AI-native baseline codebase that prioritises load speed, semantic HTML, and native AI-ready infrastructure over redesign. It is not a new look. It is the same site, faster and more machine-readable. The transfer typically runs in days rather than months, and costs a fraction of what a full rebuild did even a year ago.

Earn a citation. Pick three Australian industry publications in your category, and pitch a guest byline, a quote for an upcoming piece, or a data-led story using your internal numbers. One earned citation on a trusted AU source is worth more than twenty on low-authority sites. This work is slow. Start it now so the authority compounds through month three and beyond. Our brand citations for AI service runs this workstream as a managed programme.

Deliverable at day 60: cornerstone content rewritten, schema deployed, PageSpeed in the green, and two or three citation conversations in flight. Rerun the baseline prompts from month one. You should see small movement.

Days 61-90: measurement and compounding

Month three is where GEO becomes a programme rather than a project. The work shifts from one-time fixes to a rhythm you can sustain.

Stand up monthly LLM tracking. The baseline prompts from day 30 become a monthly test set. Run them in the same order, on the same day each month, across the same five AI engines. Track whether your citation rate is rising, which engines are citing you more, and which prompts you still lose. This is how you prove GEO is working, or identify where it is not. You can run a manual version in a spreadsheet. Purpose-built platforms automate the tracking across ChatGPT, Claude, Perplexity, Gemini and Copilot (our own Geo tool is one, and there are several others worth looking at). Our free AI Visibility Audit gives you a snapshot in under a minute without any sign-up.
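The manual spreadsheet version boils down to three calculations: citation rate per engine, the change since last month, and the prompts you still lose. Here is a minimal Python sketch, assuming each logged row has hypothetical `prompt`, `engine` and `cited` fields.

```python
def citation_rate_by_engine(runs, brand):
    """Share of runs per engine in which the brand is cited."""
    totals, hits = {}, {}
    for run in runs:
        engine = run["engine"]
        totals[engine] = totals.get(engine, 0) + 1
        if brand in run["cited"]:
            hits[engine] = hits.get(engine, 0) + 1
    return {engine: hits.get(engine, 0) / totals[engine] for engine in totals}


def monthly_change(previous, current):
    """Change in citation rate per engine, in percentage points / 100."""
    return {engine: current[engine] - previous.get(engine, 0.0)
            for engine in current}


def lost_prompts(runs, brand):
    """Prompts where the brand is not cited by any engine."""
    prompts = {run["prompt"] for run in runs}
    won = {run["prompt"] for run in runs if brand in run["cited"]}
    return sorted(prompts - won)
```

Feed it last month's rows and this month's rows, and the output is the core of the tracking dashboard: where you are rising, where you are flat, and which prompts to target next.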

Build a content freshness cadence. Commit to refreshing your top ten pages every 7 to 14 days. Refreshing does not mean republishing. It means adding a new stat, a new FAQ, a new section, a new date stamp. AI engines prefer recent content, and so do your buyers. Build it into the content calendar the same way you build in newsletter sends.
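The freshness calendar can be as simple as a list of pages and their last refresh dates, checked against the 14-day ceiling. A minimal sketch follows. The URLs and the `overdue_pages` helper are hypothetical.

```python
from datetime import date, timedelta


def overdue_pages(last_refreshed, today, max_age_days=14):
    """Return the pages whose last refresh is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, refreshed in last_refreshed.items()
                  if refreshed < cutoff)


# Hypothetical cornerstone pages and the date each was last refreshed.
pages = {
    "/commercial-solar": date(2025, 6, 1),
    "/battery-storage": date(2025, 6, 20),
}
due = overdue_pages(pages, today=date(2025, 6, 25))
```

Run it weekly and whatever comes back is that week's refresh list.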

Set up brand sentiment and accuracy monitoring. Every month, note the framing and the factual accuracy of your brand's AI mentions. When you find a hallucination (a wrong stat, an outdated product, a confused leadership team), the fix is usually upstream. Your own content needs to state the correct fact clearly, ideally on a page the AI engines already trust. Stating the correct fact clearly on your homepage typically ripples through AI answers within weeks.

Expand the citation surface. With two or three earned citations banked in month two, double the target list in month three. Aim for six to eight trusted Australian sources mentioning your brand by name, with or without a link. Unlinked brand mentions count for GEO in a way they never did for SEO. This is the single most under-priced marketing tactic in Australia right now.

Deliverable at day 90: a monthly GEO tracking dashboard, a content freshness calendar, a running citation list, and a sentiment log. The baseline prompts from day 30 should show your brand moving into AI answers it was absent from. The compounding has started.

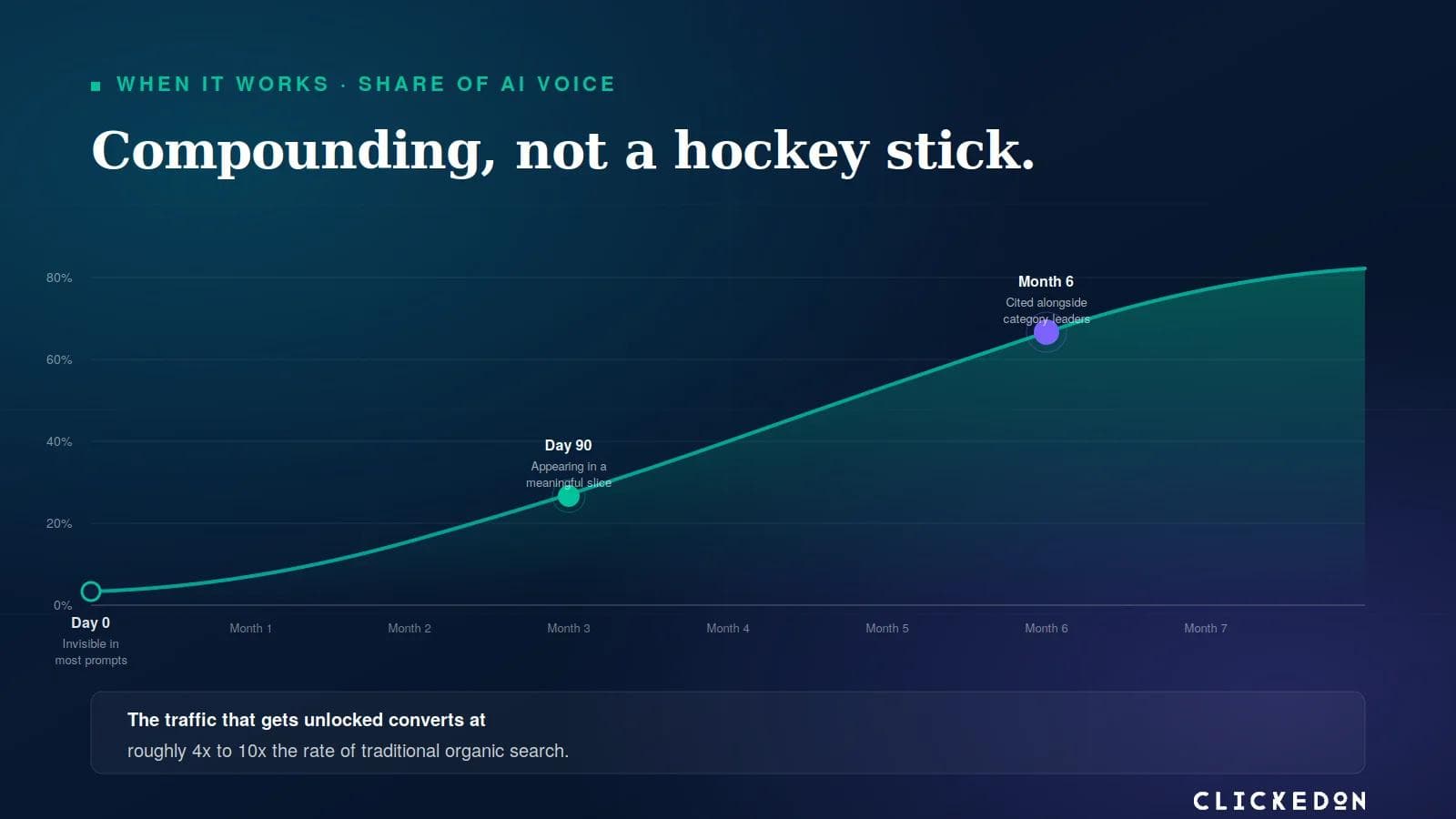

What this looks like when it works

The pattern we see across client programmes is steady, compounding progress rather than a hockey stick. Brands typically move from being absent in the majority of category prompts at day zero, to appearing in a meaningful slice by day ninety, to being cited consistently alongside category leaders by month six. None of the gains come from paid media. All of them come from answer-first content, schema hygiene, PageSpeed fixes, and a disciplined monthly cadence of citation earning and content refresh.

The AI traffic that gets unlocked converts at roughly 4x to 10x the rate of traditional organic, in line with the 4.4x lift Previsible reported across their client set and what we see across our own benchmarks. That is the number that makes GEO a board-level conversation rather than a specialist SEO project.

Frequently asked questions

What is GEO and how is it different from SEO?

GEO (Generative Engine Optimisation) is the practice of earning brand citations and recommendations inside AI answers from ChatGPT, Claude, Perplexity, Gemini and Copilot. SEO targets the ten blue links. GEO targets the AI-generated response that increasingly sits above them. The foundations overlap (around 76% of URLs cited inside Google AI Overviews also rank in the top 10 organic results), but the optimisation targets (answer-first structure, citation authority, freshness, multi-engine presence) are different enough that a dedicated GEO programme is now table stakes.

How long does a GEO programme take to show results?

The 90-day plan above is the typical timeline for a small business or a one-person marketing team running the programme themselves. With an agency partner running it full-time, we see measurable citation lift in 30 to 60 days, and consistent inclusion in category answer sets by month three or four. Full compounding (being cited alongside category leaders by default) typically takes six to nine months.

Do I need to be on every AI engine, or can I pick one?

Optimising for ChatGPT alone leaves citations on the table. ChatGPT is the biggest engine by volume, Perplexity is growing faster, Google AI Overviews touch a large slice of classic Google traffic, and Claude is heavily used in B2B research. The good news is that most of the work transfers across engines. Answer-first content, clean schema, domain authority and content freshness move the needle everywhere. The last 10% is engine-specific.

Is llms.txt a silver bullet for GEO?

No. llms.txt is a nice-to-have signal, not a ranking factor. Adding one does not make your content extractable, authoritative, or fresh. Spend your time on answer-first structure, schema markup and citation building first. Add llms.txt at the end as hygiene, not as a strategy.

How do I measure AI-referred traffic inside GA4?

Set up referral tracking for chatgpt.com, perplexity.ai, claude.ai and gemini.google.com. Tag campaigns so you can attribute AI-sourced conversions. Replay your baseline prompts monthly and record citation share. Expect AI-referred sessions to be a small fraction of total traffic today (around 1% of sessions in our client data), but to convert at 4x to 10x the rate of classic organic.
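If you export session data from GA4, the referral grouping can be reproduced in a few lines. The sketch below classifies a referrer URL against the four domains listed above. The function name and the engine labels are illustrative assumptions, and you can extend the mapping with any other AI referrers that appear in your own data.

```python
from urllib.parse import urlparse

# Referrer domains from the answer above, mapped to a reporting label.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def ai_source(referrer_url):
    """Return the AI engine label for a referrer URL, or None if not AI."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, label in AI_REFERRERS.items():
        # Match the domain itself or any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return label
    return None
```

Matching on the full hostname rather than a substring avoids mislabelling lookalike domains, and unrelated Google traffic stays out of the Gemini bucket.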

What should I do if ChatGPT is citing outdated or incorrect information about my brand?

Fix the upstream source. Restate the correct fact clearly on a page the AI engines already trust (usually your homepage or about page), add matching schema, and earn a fresh citation on an authoritative third-party source. Most hallucinations clear within four to eight weeks once the corrected signal is in place.

Key takeaways

- GEO is how your brand appears inside ChatGPT, Claude, Perplexity, Gemini and Copilot, and most Australian enterprise brands are under-indexed today.

- The gap compounds. Leaders earn citations that feed authority that earns more citations, which is why waiting twelve months is expensive.

- What actually works: answer-first content, schema markup, third-party citations on trusted AU sources, fast clean pages, monthly tracking across the five major AI engines.

- What is noise: llms.txt as a silver bullet, "AI keyword stuffing", and AI SEO tools that only measure.

- The 90-day plan is diagnose (days 1-30), fix (days 31-60), measure and compound (days 61-90).

- AI traffic converts at roughly 4x to 10x the rate of classic organic, which is why the business case does not depend on absolute traffic volume.

Where to start

If the 90-day plan is enough for you to run in-house, run it. That is a good outcome. Print the three headers onto a single page, stick it on the wall, and work through it.

If you want a sharper starting point, the free GEO Readiness Checker on our site runs the technical and content audit from the Days 1-30 section in about a minute. No sign-up. It gives you the gaps. What you do next is your call.

And if at some point the scale of the citation work, the tracking cadence, or the cornerstone content rewrite is larger than what you can run internally, that is usually the right time to bring in a partner. We have been running GEO as a managed programme for Australian enterprise clients since early 2025, and we are a Google Premier Partner (top 3% of agencies in Australia, five years running). Have a look at what we do, or send us a note.

The point is not the tool or the partner. The point is that your brand gets on the field before the rest of the category locks in the citations that matter.